ALevel Psychology Paper 2

Research Methods 1 Past Questions and Mark

Scheme

Name:

Class:

Time:

Marks:

Comments:

Page 1 of 157

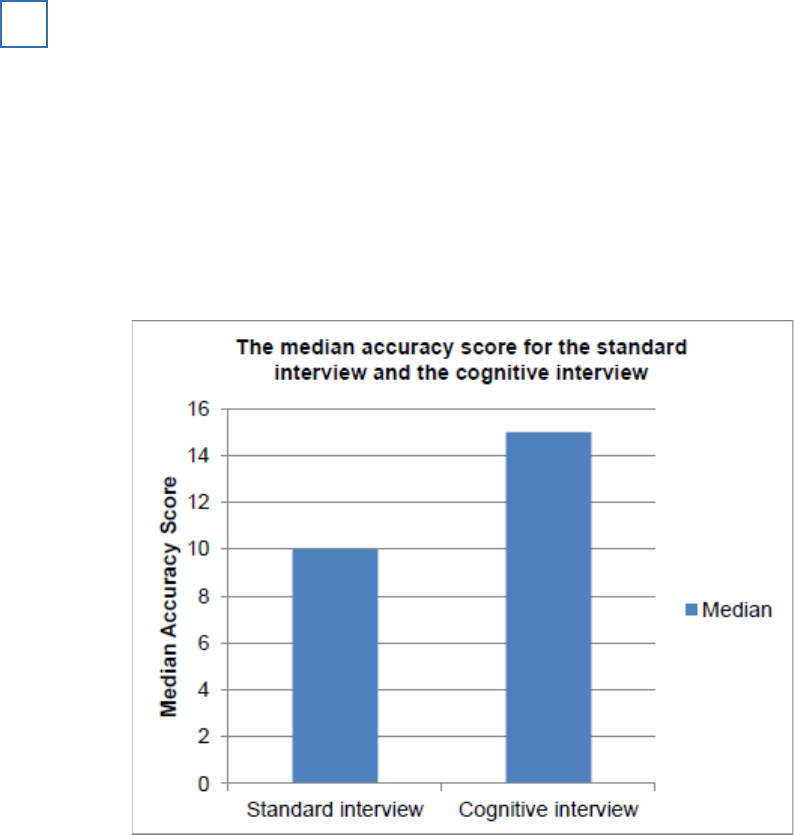

Read the item and then answer the questions that follow.

Participants in an experiment were shown a film of a robbery. The participants were then divided

into two groups. One group was interviewed using a standard interview technique and the other

group was interviewed using the cognitive interview technique. All participants were then given

an ‘accuracy score’ (out of 20) based on how closely their recall matched the events in the film

(20 = completely accurate, 0 = not at all accurate).

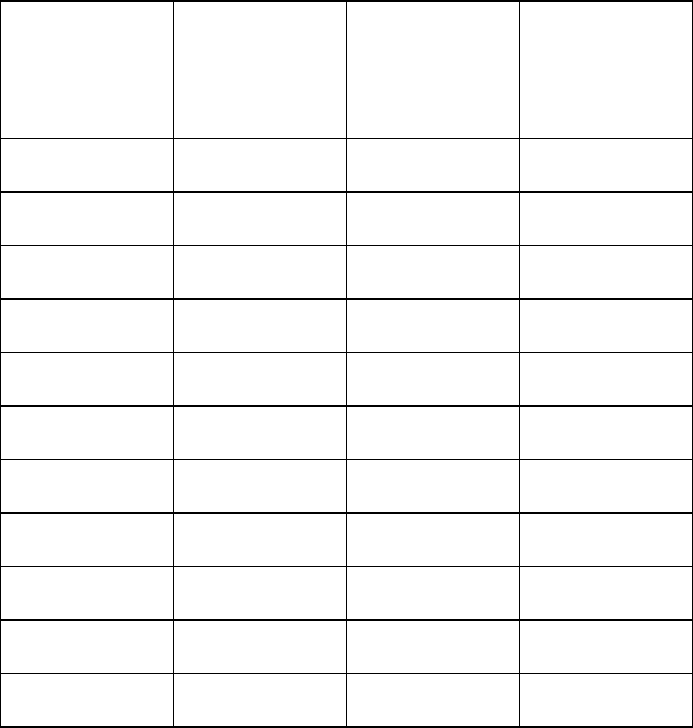

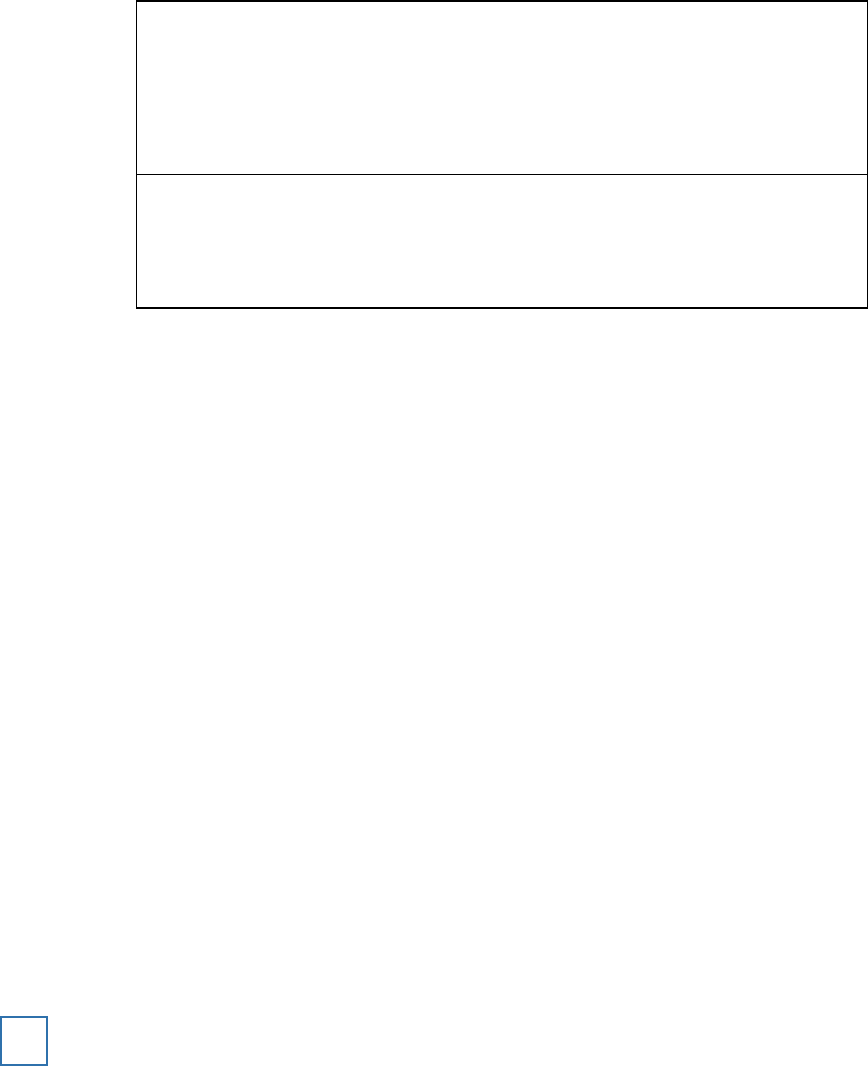

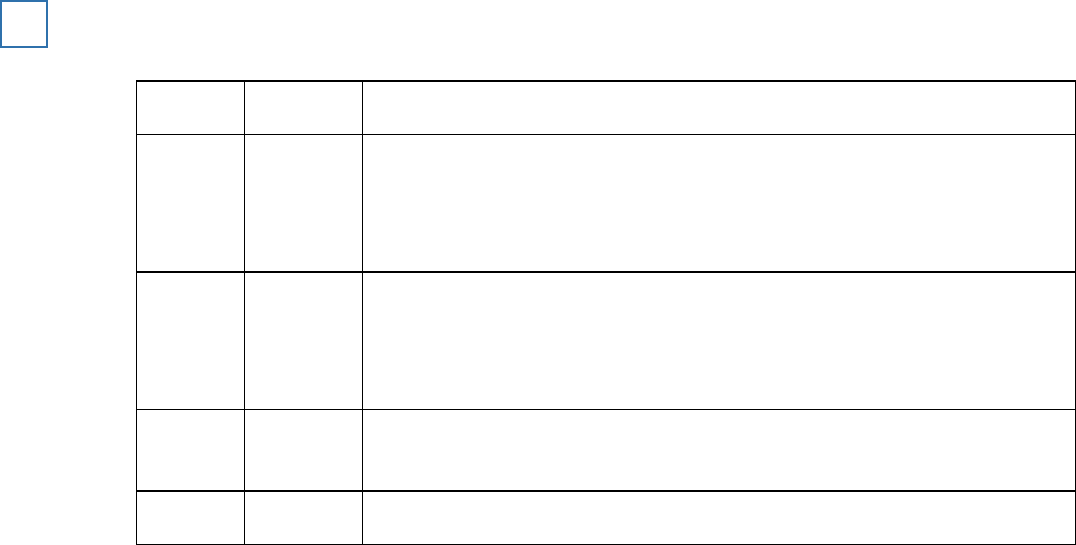

The results of the experiment are shown in the table below.

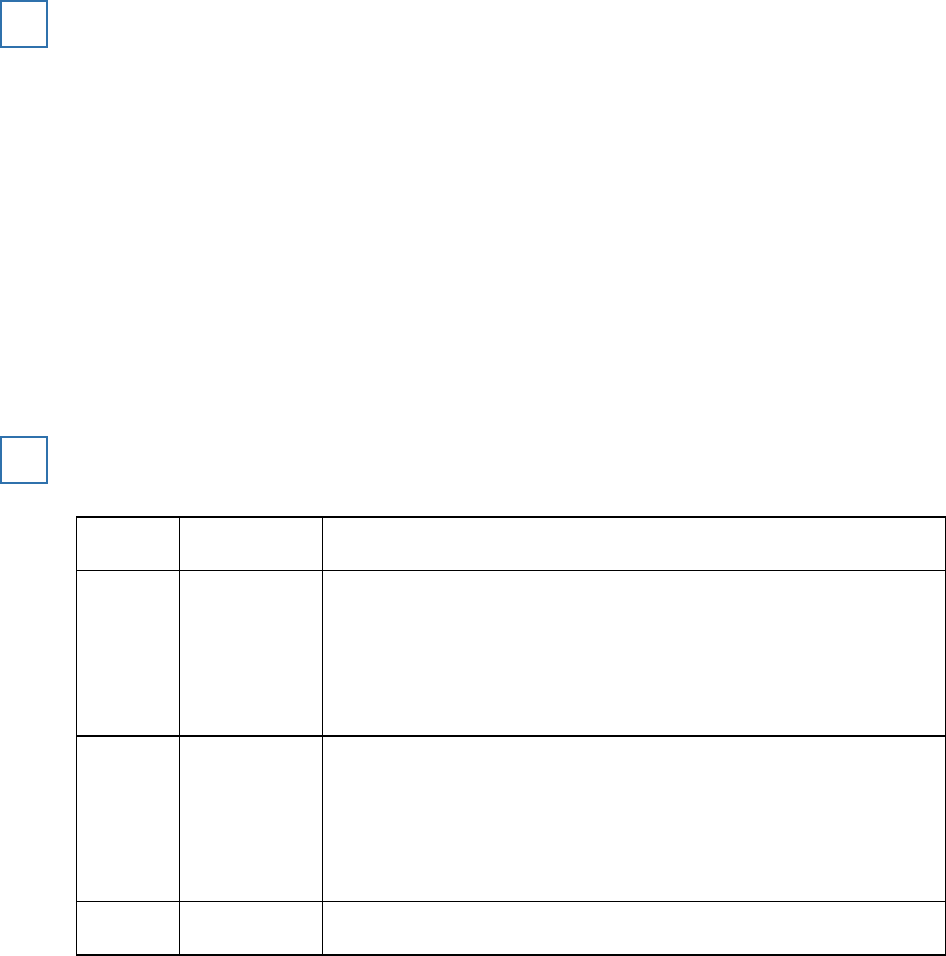

The median accuracy score for the standard interview and the cognitive interview

1

Standard interview

Cognitive interview

Median

10

15

(a) Sketch an appropriate graphical display to show the median accuracy scores in the table

above.

(6)

Page 2 of 157

(b) The experiment used an independent groups design.

Explain how this study could have been modified by using a matched pairs design.

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(4)

(Total 10 marks)

Read the item and then answer the question that follows.

Studies of attachment often involve observation of interactions between mother

and baby pairs like Tasneem and Aisha. Researchers sometimes write down

everything that happens as it takes place, including their own interpretation of

the events.

Explain how such observational research might be refined through the use of behavioural

categories.

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

(Total 4 marks)

2

Page 3 of 157

Read the item and then answer the questions that follow.

A psychologist wanted to see if creativity is affected by the presence of other people. To test this

he arranged for 30 people to participate in a study that involved generating ideas for raising

funds for a local youth club. Participants were randomly allocated to one of two conditions.

Condition A:

there were 15 participants in this condition. Each participant was placed separately

in a room and was given 40 minutes to think of as many ideas as possible for raising funds for a

local youth club. The participant was told to write down his or her ideas and these were collected

in by the psychologist at the end of the 40 minutes.

Condition B:

there were 15 participants in this condition. The participants were randomly

allocated to 5 groups of equal size. Each group was given 40 minutes to think of as many ideas

as possible for raising funds for a local youth club. Each group was told to write down their ideas

and these were collected by the psychologist at the end of the 40 minutes.

The psychologist counted the number of ideas generated by the participants in both conditions

and calculated the total number of ideas for each condition.

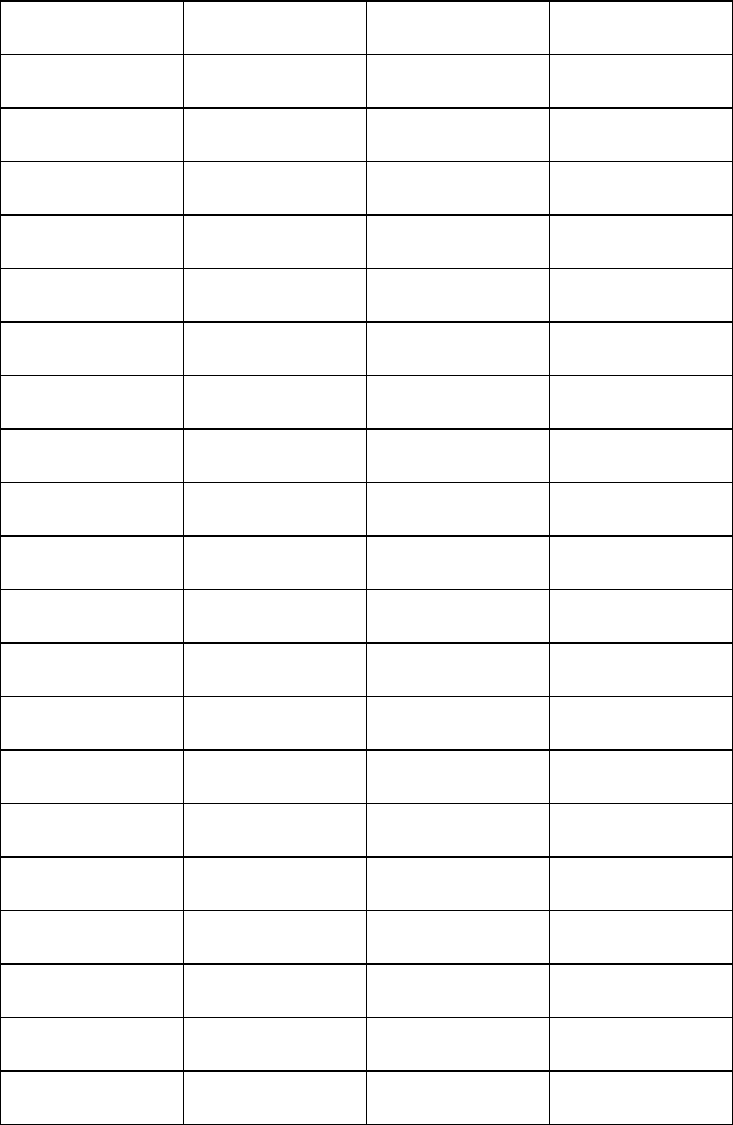

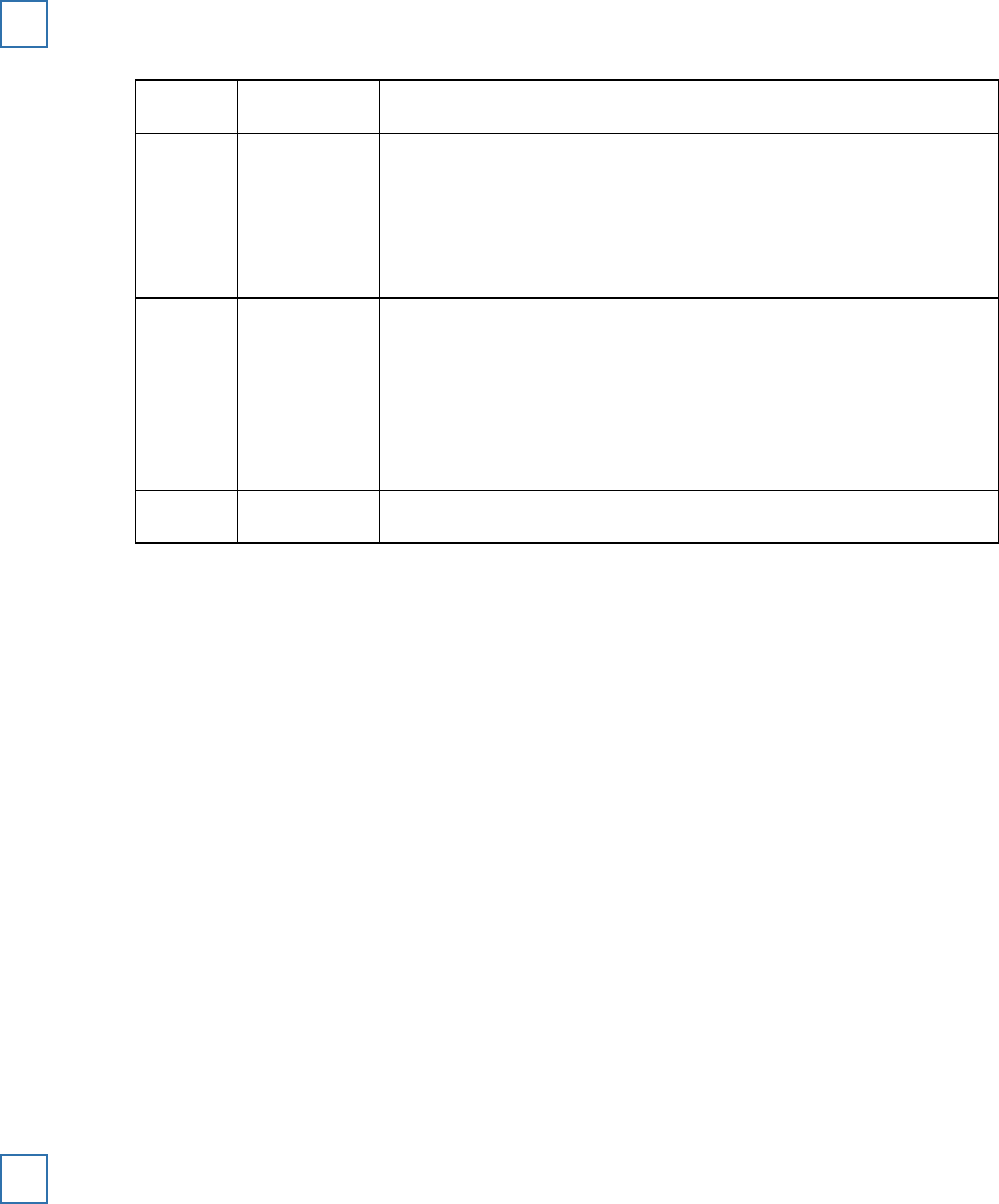

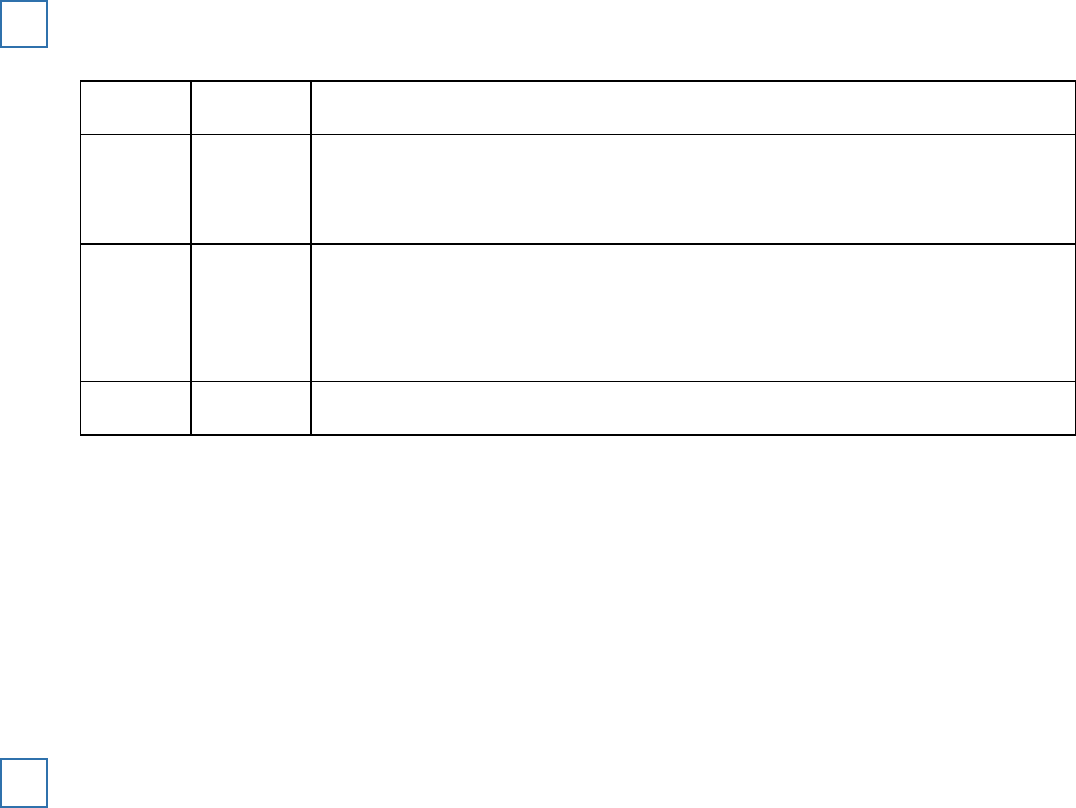

Total number of ideas generated in Condition A (when working alone) and in Condition B

(when working in a group)

3

Condition A

Working alone

Condition B

Working in a group

Total number of

ideas generated

110

75

(a) Identify the experimental design used in this study

and

outline

one

advantage of this

experimental design.

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(3)

Page 4 of 157

(b) Describe

one other

experimental design that researchers use in psychology.

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(2)

(c) Apart from using random allocation, suggest

one

way in which the psychologist might have

improved this study by controlling for the effects of extraneous variables. Justify your

answer.

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(2)

(d) Write a suitable hypothesis for this study.

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(3)

(e) From the information given in the description, calculate the number of participants in each

group in Condition B.

______________________________________________________________

______________________________________________________________

(1)

Read the item and then answer the questions that follow.

The psychologist noticed that the number of ideas generated by each of the

individual participants in

Condition A

varied enormously whereas there was

little variation in performance between the 5 groups in

Condition B.

He

decided to calculate a measure of dispersion for each condition.

Page 5 of 157

(f) Name a measure of dispersion the psychologist could use.

______________________________________________________________

______________________________________________________________

(1)

(g) The psychologist uses the measure of dispersion you have named in your answer to

question (f)

. State how the result for each condition would differ.

______________________________________________________________

______________________________________________________________

(1)

(h) Explain how the psychologist could have used random allocation to assign the 15

participants in

Condition B

into the 5 groups.

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(3)

(i) Using the information given in the table above, explain how the psychologist could further

analyse the data using percentages.

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(2)

Page 6 of 157

(j) At the end of the study the psychologist debriefed each participant. Write a debriefing that

the psychologist could read out to the participants in

Condition A.

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

Extra space

___________________________________________________

______________________________________________________________

______________________________________________________________

(6)

(Total 24 marks)

Read the item and then answer the questions that follow.

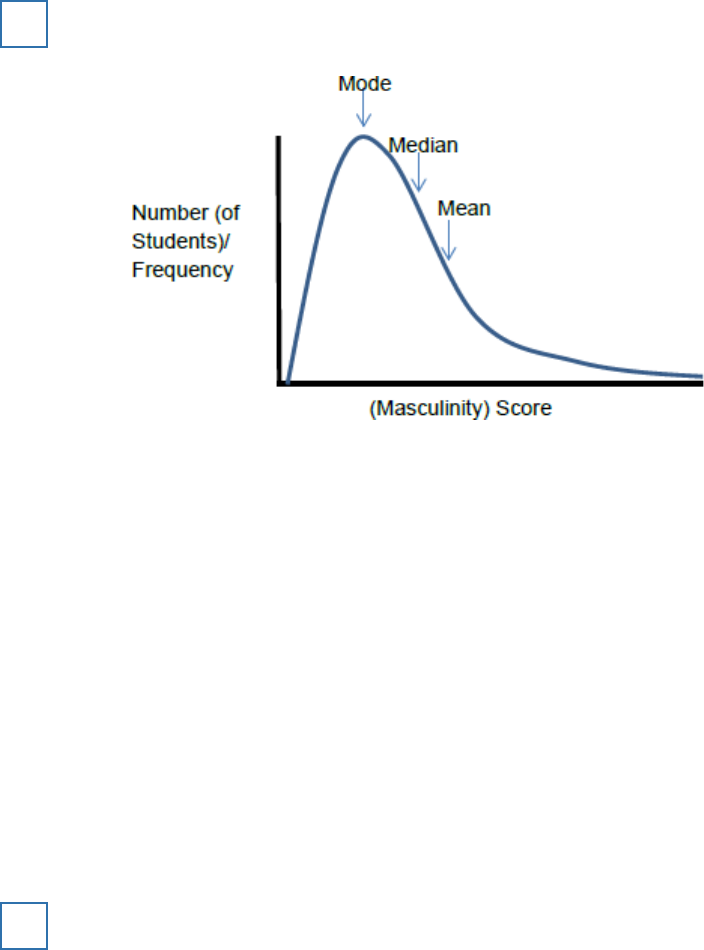

In a study of androgyny, a group of 100 18-year-old students completed a

self-report sex-role inventory. The inventory gave two sets of scores: a

femininity score and a masculinity score. Each set of scores was on a scale of

0–20, with 0 representing no masculinity or no femininity and 20 representing

extreme masculinity or extreme femininity.

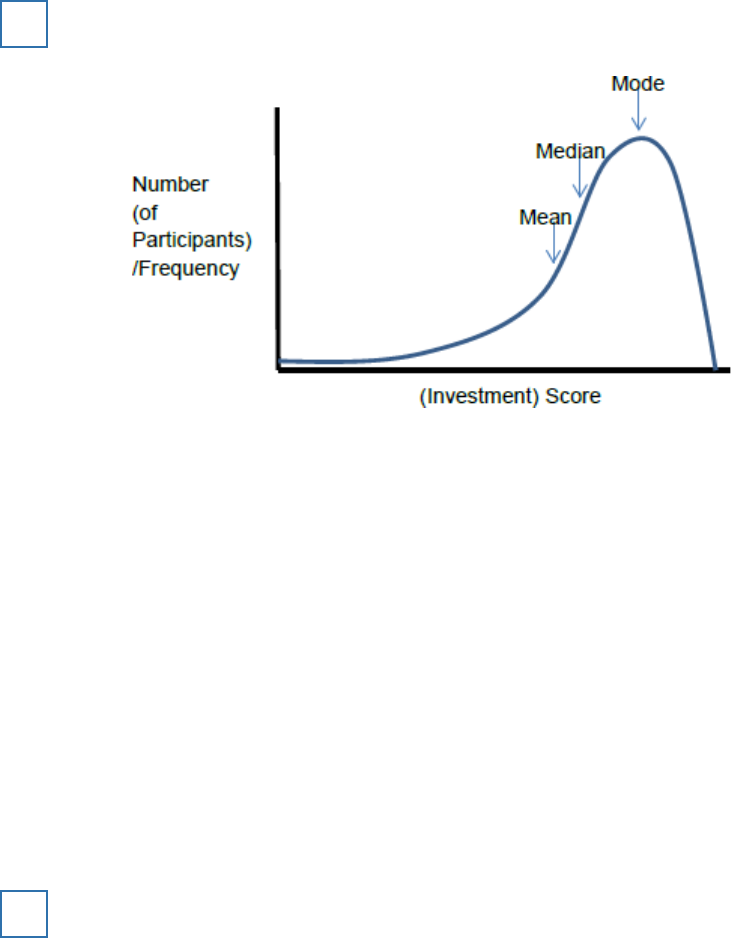

The researchers calculated measures of central tendency for the masculinity

scores. They found that the mean masculinity score was 10.3, the median

masculinity score was 9.5 and the mode masculinity score was 7.

(a) Sketch a graph to show the most likely distribution curve for the masculinity scores in this

study. Label the axes of your graph and mark on it the positions of the mean, median and

mode.

(3)

4

(b) What sort of distribution does your graph show?

(1)

(Total 4 marks)

Page 7 of 157

The following results are percentages of participants who gave the maximum shock, in variations

of Milgram’s experiment into obedience to authority.

Condition

% Participants obeying

Experimenter and two obedient confederates are in the

same room as the participant.

92.5%

Experimenter is in the same room as the participant.

65%

Experimenter is in a different room from the participant.

20.5%

Experimenter and two disobedient confederates are in

the same room as the participant.

10%

5

What do these results suggest about the power of the confederates in variations of Milgram’s

study?

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

Extra space

________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

(Total 4 marks)

Page 8 of 157

Outline

one

strength and

one

weakness of using correlations in stress research.

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

Extra space

________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

(Total 4 marks)

6

A psychologist carried out a research study to investigate the effects of institutional care. To do

this, she constructed a questionnaire to use with 100 adults who had spent some time in an

institution when they were children.

She also carried out interviews with ten of the adults.

(a) For this study, explain

one

advantage of collecting information using a questionnaire.

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(3)

7

Page 9 of 157

(b) In this study, the psychologist collected some qualitative data. Explain what is meant by

qualitative data.

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(2)

(c) Write

one

suitable question that could be used in the interviews to produce qualitative data.

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(2)

(d) Identify

two

ethical issues that the psychologist would need to consider in this research.

Explain how the psychologist could deal with

one

of these issues.

Ethical Issue 1

_________________________________________________

______________________________________________________________

Ethical Issue 2

_________________________________________________

______________________________________________________________

How the psychologist could deal with one of these issues _______________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(5)

(Total 12 marks)

Page 10 of 157

A researcher studied the effect of context on memory. He used an independent groups design.

He tested participants in one of two conditions.

In

Condition 1

, a group of 20 schoolchildren learned a list of 40 words in a classroom. This

group then recalled the words in the same classroom.

In

Condition 2

, a different group of 20 schoolchildren learned the same list of 40 words in

a classroom. This group then recalled the words in the school hall.

The researcher recorded the results and compared the mean number of words recalled in

each condition.

8

(a) Identify the independent variable in this study.

(1)

(b) Use your knowledge of retrieval failure to explain the likely outcome of this study.

(3)

(c) In this study, participants were randomly allocated to one of the two conditions. Explain how

this might have been carried out.

(2)

(d) In this study, the researcher used an independent groups design. The researcher decided

to repeat the study with different participants and to use a matched pairs design.

Explain how these participants could be matched and then allocated to the conditions.

(2)

(Total 8 marks)

The report was subjected to peer review before it was published in a journal.

What is meant by peer review?

(Total 2 marks)

9

Page 11 of 157

Dave, a middle-aged male researcher, approached an adult in a busy street. He asked the adult

for directions to the train station. He repeated this with 29 other adults.

Each of the 30 adults was then approached by a second researcher, called Sam, who showed

each of them 10 photographs of different middle-aged men, including a photograph of Dave. Sam

asked the 30 adults to choose the photograph of the person who had asked them for directions

to the train station.

Sam estimated the age of each of the 30 adults and recorded whether each one had correctly

chosen the photograph of Dave.

(a) Identify

one

aim of this experiment.

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(2)

10

(b) Suggest

one

reason why the researchers decided to use a field experiment rather than

a laboratory experiment. .

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(2)

(c) Name the sampling technique used in this experiment. Evaluate the choice of this sampling

technique in this experiment.

Sampling technique

____________________________________________

Evaluation

____________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

Page 12 of 157

Extra space

___________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(4)

(d) Identify

one

possible extraneous variable in this experiment. Explain how this extraneous

variable could have affected the results of this experiment.

Extraneous variable

____________________________________________

How this extraneous variable could have affected the results of this experiment

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

Extra space

___________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(4)

(Total 12 marks)

A psychology student was asked to design an investigation to see whether taking exercise could

increase feelings of happiness. She proposed to do an experiment. She decided to recruit a

sample of volunteers who had just joined a gym, by putting up a poster in the gym. She planned

to carry out a short interview with each volunteer and to give each one a happiness score. She

intended to interview the volunteers again after they had attended the gym for six weeks and to

reassess their happiness score to see if it had changed.

The psychology student’s teacher identified a number of limitations of the proposed experiment.

Explain

one or more

limitations of the student’s proposal

and

suggest how the investigation

could be improved.

(Total 10 marks)

11

Page 13 of 157

A group of researchers conducted a survey about helping behaviour. They asked an opportunity

sample of 200 university students to complete a questionnaire. The questionnaire contained

open and closed questions. The following are examples of questions used in the questionnaire:

A

Do you think that you are generally a helpful person? Yes No

B

What do you think most people would do if they were driving in the rain and saw a woman

standing alone next to her broken-down car?

C

How would you react if someone walking in front of you slipped and fell over?

12

(a) Identify an open question from

A

,

B

or

C

above. Give

one

advantage of using open

questions.

Example of open question (write

A

,

B

or

C

) ________

(1)

Advantage _____________________________________________________

______________________________________________________________

______________________________________________________________

(1)

The researchers then categorised the responses given to question

C

above.

The results are shown in

Table 1

.

Table 1: The number of participants who gave the following responses to question C

Help the person

Ignore the person

Laugh at the person

Other reactions

137

23

31

9

Page 14 of 157

(b) What conclusion might the researchers draw from the responses given in

Table 1

above?

Justify your answer.

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(2)

On the basis of the responses to question

C

, the researchers decided to conduct a further

investigation. The aim was to see whether an individual’s helping behaviour might be

affected by the presence of other people.

The participants were an opportunity sample of 40 first-year students. The students were

told that they would be interviewed about university life. Each student was met by an

interviewer and asked to wait. The interviewer then went into the next room. After two

minutes there was a loud noise and a cry of pain from the next room.

Twenty participants took part in

Condition 1

and the other 20 participants took part in

Condition 2

.

Condition 1

Each participant waited alone.

Condition 2

Each participant waited with another person who had previously been told by

the researchers not to react to the sounds from the next room.

The researchers counted the number of participants in each condition who went to help the

interviewer in the next room.

(c) Write a suitable experimental hypothesis for the further investigation.

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(2)

Page 15 of 157

(d) Suggest

one

extraneous variable that might be present in the further investigation.

Explain why this variable should be controlled and how it could be controlled.

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(3)

(e) Identify the experimental design used in the further investigation. Explain why this is a

suitable experimental design for this study.

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(3)

Page 16 of 157

(f) Explain how random sampling might have been used to select the participants in the further

investigation.

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(2)

The results of the further investigation are given below.

Table 2: Number of participants who went to help the interviewer in Condition 1 and

Condition 2

Condition 1

(Participant waiting alone)

Condition 2

(Participant waiting with another person)

20

9

(g) Suggest a suitable graphical display that could be used to represent the data in

Table 2

.

Justify your choice.

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(2)

Page 17 of 157

(h) After the further investigation, the researchers debriefed the participants. Discuss

two

points that the researchers should have included when they debriefed the participants.

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(4)

(Total 20 marks)

The report was subjected to peer review before it was published in a journal.

Explain why peer review is an important aspect of the scientific process.

(Total 4 marks)

13

Some studies have suggested that there may be a relationship between intelligence and

happiness. To investigate this claim, a psychologist used a standardised test to measure

intelligence in a sample of 30 children aged 11 years, who were chosen from a local secondary

school. He also asked the children to complete a self-report questionnaire designed to measure

happiness. The score from the intelligence test was correlated with the score from the happiness

questionnaire. The psychologist used a Spearman’s rho test to analyse the data. He found that

the correlation between intelligence and happiness at age 11 was +0.42.

(a) Write an operationalised non-directional hypothesis for this study.

(2)

14

(b) Identify an alternative method which could have been used to collect data about happiness

in this study. Explain why this method might be better than using a questionnaire.

(4)

(c) A Spearman’s rho test was used to analyse the data. Give

two

reasons why this test was

used.

(2)

Extract from table of critical values from Spearman’s rho(r

s

) test

Page 18 of 157

N (number of participants)

Level of significance for a two-tailed test

0.10

0.05

Level of significance for a one-tailed test

0.05

0.025

29

0.312

0.368

30

0.306

0.362

31

0.301

0.356

Calculated r

s

must equal or exceed the table (critical) value for significance at the level shown.

(d) The psychologist used a non-directional hypothesis. Using the table above, state whether

or not the correlation between intelligence and happiness at age 11 (+0.42) was significant.

Explain your answer.

(3)

(e) Five years later, the same young people were asked to complete the intelligence test and

the happiness questionnaire for a second time. This time the correlation was –0.29.

With reference to

both

correlation scores, outline what these findings seem to show about

the link between intelligence and happiness.

(4)

(Total 15 marks)

A researcher believed that there is a biological basis to aggression in males. She predicted that

there would be a significant difference between the levels of the hormone testosterone in

aggressive males and the levels of the hormone testosterone in non-aggressive males. In order

to test her prediction, the researcher statistically analysed the levels of testosterone in saliva

samples from 20 aggressive males and 20 non-aggressive males.

Outline

three

ways in which the study described above could be considered to be scientific.

(Total 3 marks)

15

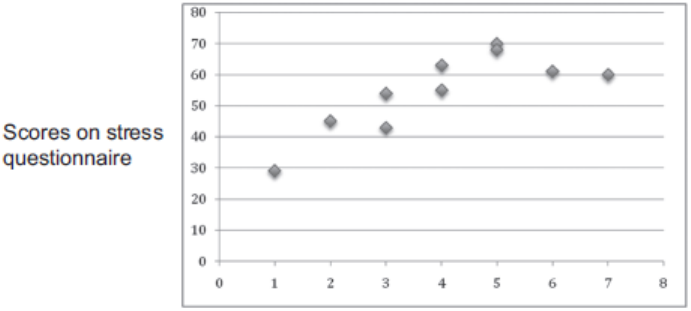

Research has shown that there is a relationship between stress and illness.

The figure below shows the number of days off work through illness in a year and scores on a

stress questionnaire, where a high score indicates more stress.

Relationship between days off work in a year

through illness and stress scores

16

Page 19 of 157

Number of days off work in a year through illness

What does the figure above tell you about the relationship between stress and illness?

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

Extra space

________________________________________________________

___________________________________________________________________

(Total 2 marks)

Page 20 of 157

A researcher used content analysis to investigate how the behaviour of young children changed

when they started day care.

He identified a group of nine-month-old children who were about to start day care.

He asked the mother of each child to keep a diary recording her child’s behaviour every day for

two weeks before and for two weeks after the child started day care.

(a) Explain how the researcher could have used content analysis to analyse what the mothers

had written in their diaries.

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

Extra space

___________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(4)

17

Page 21 of 157

(b) Explain

one or more

possible limitations of this investigation.

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

Extra space

___________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(4)

(Total 8 marks)

A psychologist used an independent groups design to investigate whether or not a cognitive

interview was more effective than a standard interview, in recalling information. For this

experiment, participants were recruited from an advertisement placed in a local paper. The

advertisement informed the participants that they would be watching a film of a violent crime and

that they would be interviewed about the content by a male police officer.

The psychologist compared the mean number of items recalled in the cognitive interview with the

mean number recalled in the standard interview.

(a) Name the sampling technique used in this experiment.

______________________________________________________________

______________________________________________________________

(1)

18

(b) Suggest

one

limitation of using this sampling technique.

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(2)

Page 22 of 157

(c) Identify the independent variable

and

the dependent variable in this experiment.

Independent variable

__________________________________________

______________________________________________________________

Dependent variable

____________________________________________

______________________________________________________________

(2)

(d) Explain

one

advantage of using an independent groups design for this experiment.

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(2)

(e) Discuss whether or not the psychologist showed an awareness of the British Psychological

Society (BPS) Code of Ethics when recruiting participants for this experiment.

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(3)

(Total 10 marks)

Briefly outline

two

problems that might arise when making generalisations on the basis of

psychological research findings.

(Total 4 marks)

19

Page 23 of 157

(a) One technique used in cognitive interviews is ‘report everything’. When using this

technique, the police officer in this investigation read the following instructions to the

participants:

“Please tell me everything you can remember about what you saw in the film. Do not leave

anything out, even the small details you think may be unimportant.”

Identify

one other

technique which could have been used by the police officer in this

cognitive interview. Write down the instructions that he could have read out to the

participants.

Technique

____________________________________________________

______________________________________________________________

Instructions to participants

______________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(3)

20

(b) The psychologist also recorded the number of correct items recalled and the number of

incorrect items recalled in each type of interview. The following results were obtained:

Cognitive Interview

Standard Interview

Mean number of correct

items recalled

45

32

Mean number of incorrect

items recalled

8

8

From these results, what might the psychologist conclude about the effectiveness of

cognitive interviews?

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(2)

(Total 5 marks)

Page 24 of 157

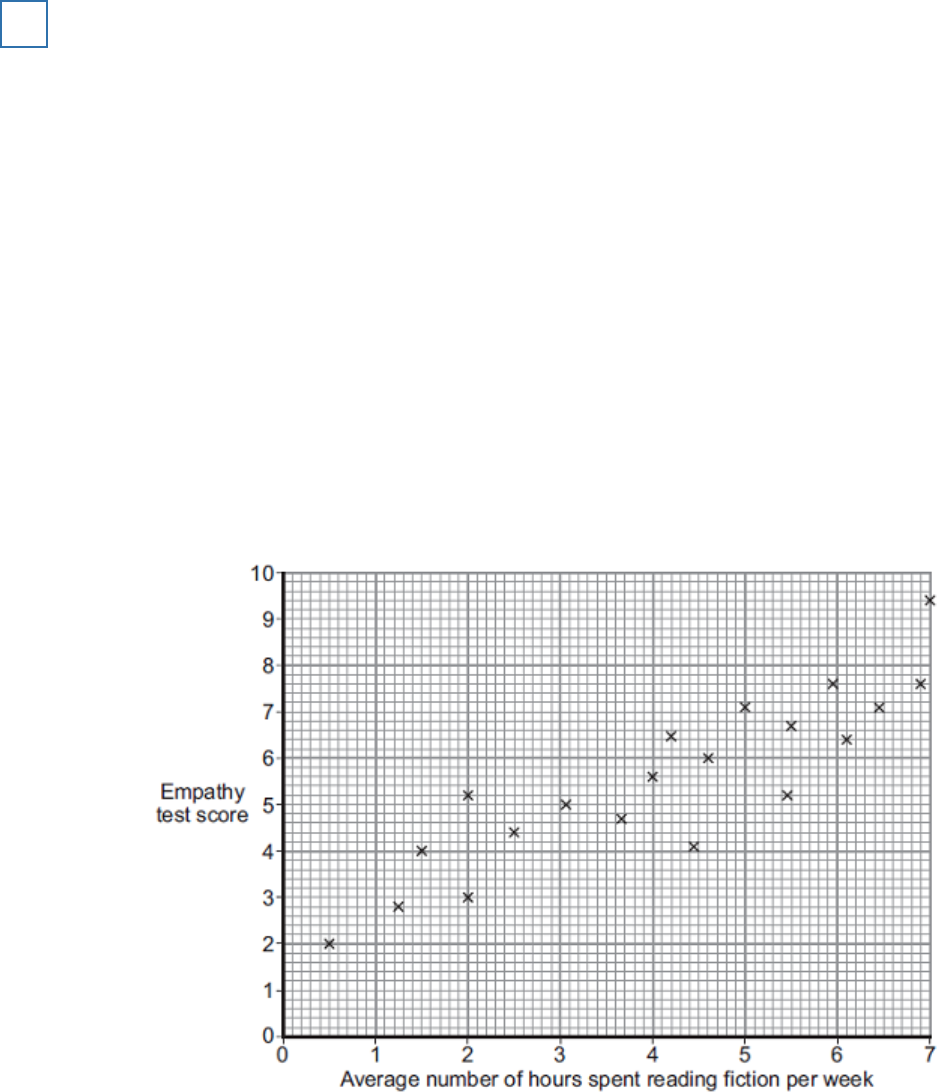

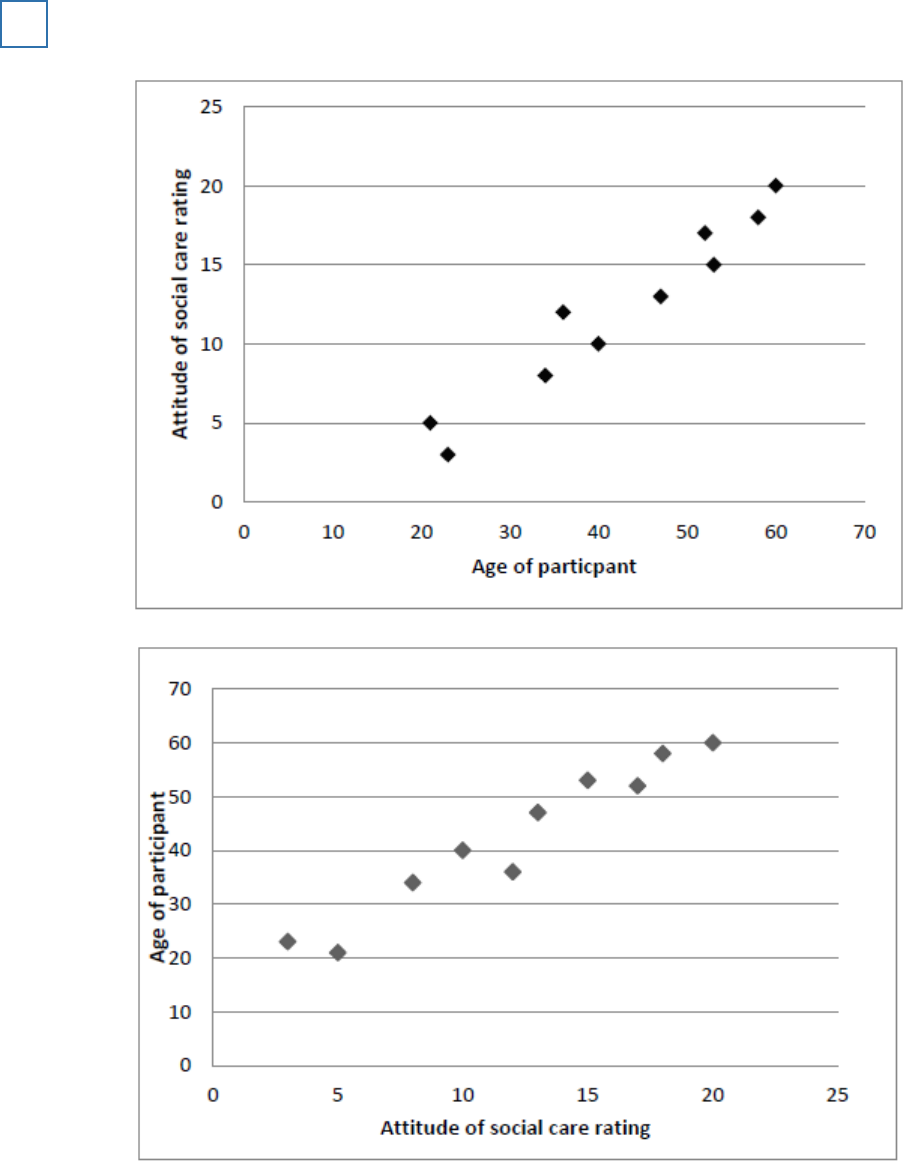

A student teacher was interested in the relationship between empathy (consideration and

feelings for others) and the time spent reading fiction. She decided to investigate whether or not

such a relationship was present in children.

The student teacher designed her own questionnaire to measure empathy in 8-year-old children.

The higher the score achieved, the greater the empathy. Twenty children, all from one school,

took part. Each child completed the questionnaire individually.

21

The student teacher designed another questionnaire to measure ‘time spent reading fiction’.

Each child was given this questionnaire to take home and complete with his or her parents over a

four-week period. ‘Time spent reading fiction’ included the time spent by parents reading to the

child as well as the time the child spent reading independently. Using the responses to this

questionnaire, the student teacher calculated how much time per week, on average, each child

spent reading fiction.

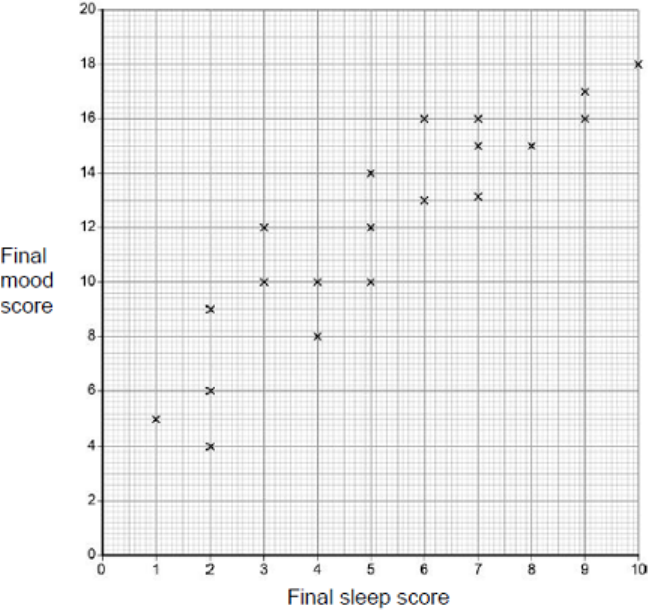

The data obtained are shown in the graph below.

Scattergram of children’s scores on a test of empathy and the average number of hours

spent reading fiction per week.

(a) Outline the relationship between empathy and the average number of hours spent reading

fiction per week shown in the graph above.

(1)

(b) Name an appropriate test to determine whether or not there is a significant relationship

between the two variables in the graph above. Justify your answer with reference to levels

of measurement.

(2)

The student teacher decided to use a two-tailed test.

Page 25 of 157

(c) Outline

one

way in which the student teacher could have assessed the validity of the

empathy questionnaire.

(2)

(d) Apart from the issue of validity, identify and briefly explain

one

methodological limitation of

the study.

(2)

(e) Explain why it was appropriate for the student teacher to use a correlation study rather than

an experiment.

(3)

(f) The student teacher noticed that some students on her course commented that they were

better able to recall information if they could read the information rather than listen to it in

lectures.

Design an experiment to test the following hypothesis:

‘People who are given written information will recall more than people who hear information

in spoken form.’

In your answer, you should refer to the following and justify your design decisions:

• the variables to be considered

• the experimental design to be used

• the sample

• relevant materials

• an outline of the proposed procedurr.

(8)

(Total 18 marks)

A psychodynamic psychologist wished to investigate the function of dreams. He asked five

friends to keep a ‘dream diary’ for a week by writing a descriptive account of their dreams as

soon as they woke up in the morning. He interpreted the content of their dreams as an

expression of their repressed wishes.

Referring to the study above, explain why psychodynamic psychologists have often been

criticised for neglecting the rules of the scientific approach.

(Total 3 marks)

22

Page 26 of 157

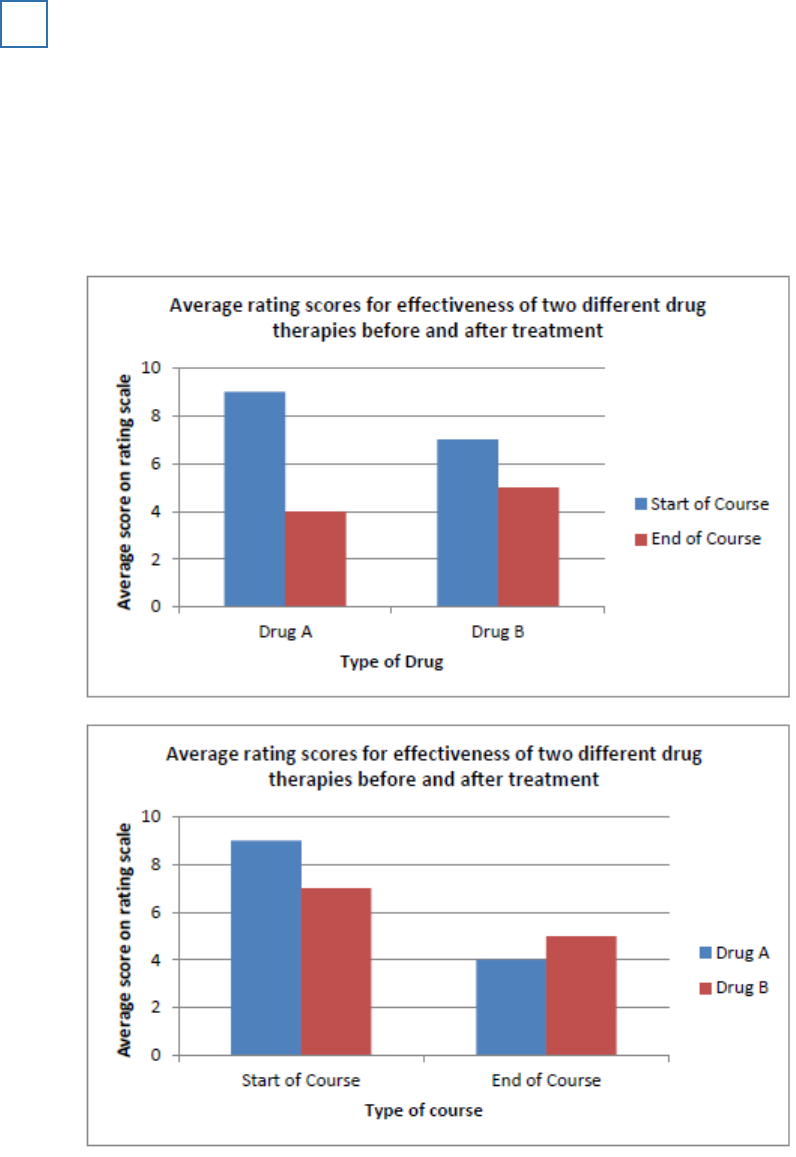

Two groups of patients took part in a trial to compare the effectiveness of two different drug

therapies. One of the groups was given

Drug A

and the other group was given

Drug B

. All

patients completed a rating scale at the start of a ten-week course of treatment and again at the

end of the course. This scale measured the severity of symptoms.

The

Drug A

group had an average score of 9 before the therapy and an average score of 4 at

the end of the course.

The

Drug B

group had an average score of 7 before the therapy and an average score of 5 at

the end of the course.

Sketch and label a bar chart to illustrate the data.

(Total 4 marks)

23

(a) Describe

one

way in which psychologists have investigated caregiver-infant interaction in

humans. Refer to a specific study in your answer.

(3)

24

(b) Evaluate the way of investigating caregiver-infant interaction that you have described in

your answer to part (a). Do

not

refer to ethical issues in your answer.

(3)

(Total 6 marks)

Page 27 of 157

A psychologist wanted to see whether or not there is a difference in the expectations that men

and women have of their own numeracy skills. She obtained a sample of 15 men and 15 women

from a factory. She conducted her study in two parts.

In the

first part

of the study, the psychologist said to each participant: “I want you to estimate

how many marks you think you will get on a maths test that is suitable for 14-year-old children. If

the test has a maximum score of 50, what mark do you think you will get?”

The psychologist recorded the estimate given by each participant and calculated the median

estimates for the men and for the women.

The results of the study are given in

Table 1

.

Table 1: Median estimated maths test scores for men and women

Median estimated maths test score

Men

31

Women

19

(a) Explain how a median score is calculated.

______________________________________________________________

______________________________________________________________

(1)

25

(b) Identify the dependent variable in this study.

______________________________________________________________

(1)

(c) Write a suitable hypothesis for this study.

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(2)

Page 28 of 157

(d) Identify and explain the experimental design used in this study.

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(2)

(e) Explain how the psychologist could have obtained a random sample of 15 men and a

random sample of 15 women for this study.

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(3)

Page 29 of 157

(f) What conclusion could the psychologist draw from the median estimated scores in

Table

1

? Justify your answer.

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(2)

In the

second part

of the study, each participant took a 30-minute maths test suitable for

14-year-old children. The test took place under examination conditions. The psychologist

marked the test. The maximum mark was 50.

The results of the maths test are given in

Table 2

.

Table 2: Median maths test scores for men and women

Median maths test score

Men

25

Women

25

(g) Taking the results from

both

parts of the study (Table 1 and Table 2), what can the

psychologist now conclude?

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(3)

Page 30 of 157

(h) After both parts of the study had been completed, the psychologist needed to debrief the

participants.

Write a debrief that the psychologist could read out to the participants.

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(4)

(i) This psychologist did not conduct a pilot study. Explain

one

reason why psychologists

sometimes conduct pilot studies.

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(2)

(Total 20 marks)

A researcher investigated whether people with obsessive-compulsive disorder (OCD) are more

aware of their own heartbeat than people who do not have OCD. A matched pairs design was

used. This involved 10 people with OCD and 10 people without OCD. The researcher asked

each participant to estimate how fast his or her heart was beating (in beats per minute) and this

was compared to his or her actual heartbeat. It was found that people with OCD were more

accurate at estimating their own heartbeat than people without OCD.

(a) Identify the independent variable in this study.

(1)

26

(b) This study is a quasi-experiment. Explain why this study is a quasi-experiment.

(2)

(c) The researcher used a matched pairs design. Identify

one

relevant variable that could have

been used to match participants in this study.

(1)

Page 31 of 157

(d) Outline

one

advantage of using a matched pairs design in this study.

(2)

(Total 6 marks)

In an observational study, 100 cars were fitted with video cameras to record the driver’s

behaviour. Two psychologists used content analysis to analyse the data from the films. They

found that 75% of accidents involved a lack of attention by the driver. The most common

distractions were using a hands-free phone or talking to a passenger. Other distractions included

looking at the scenery, smoking, eating, personal grooming and trying to reach something within

the car.

(a) What is content analysis?

(2)

27

(b) Explain how the psychologists might have carried out content analysis to analyse the film

clips of driver behaviour.

(4)

(c) Explain how the two psychologists might have assessed the reliability of their content

analysis.

The psychologists then designed an experiment to test the effects of using a hands-free

phone on drivers’ attention. They recruited a sample of 30 experienced police drivers and

asked them to take part in two computer-simulated driving tests. Both tests involved

watching a three-minute film of a road. Participants were instructed to click the mouse as

quickly as possible, when a potential hazard (such as a car pulling out ahead) was spotted.

Each participant completed two computer-simulated driving tests:

• Test A, whilst chatting with one of the psychologists on a hands-free phone

• Test B, in silence, with no distractions.

The order in which they completed the computer tests was counterbalanced.

(3)

(d) Explain why the psychologists chose to use a repeated measures design in this

experiment.

(3)

(e) Identify

one

possible extraneous variable in this experiment. Explain how this variable may

have influenced the results of this experiment.

(3)

(f) Explain

one or more

ethical issues that the psychologists should have considered in this

experiment.

(4)

Page 32 of 157

(g) Write a set of standardised instructions that would be suitable to read out to participants,

before they carry out Test A, chatting on a hands-free phone.

The computer simulator measured two aspects of driver behaviour:

• the number of hazards detected by each driver

• the time taken to respond to each hazard, in seconds.

The mean scores for each of these measures is shown in the table below.

Table to show the mean number of hazards detected and mean reaction times in

seconds for Test A and Test B

Mean scores

Test A: with

hands-free phone

Test B: in silence

Number of hazards

detected

26.0

23.0

Reaction time in

seconds

0.45

0.27

The psychologists then used an inferential statistical test to assess whether there was a

difference in the two conditions.

(5)

(h) Identify an appropriate statistical test to analyse the difference in the number of hazards

detected in the two conditions of this experiment. Explain why this test of difference would

be appropriate.

They found no significant difference in the number of hazards detected (p > 0.05), but there

was a significant difference in reaction times (p . 0.01).

(3)

(i) Explain why the psychologists did not think that they had made a Type 1 error in relation to

the difference in reaction times.

(2)

(j) Replication is one feature of the scientific method. The psychologists decided to replicate

this experiment using a larger sample of 250 inexperienced drivers.

Explain why replication of this study would be useful.

(3)

(Total 32 marks)

Page 33 of 157

Type A personality can be measured by using a questionnaire. Explain

two

strengths of using

questionnaires.

Strength One

_______________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

Extra space

________________________________________________________

___________________________________________________________________

Strength Two

_______________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

Extra space

________________________________________________________

___________________________________________________________________

(Total 4 marks)

28

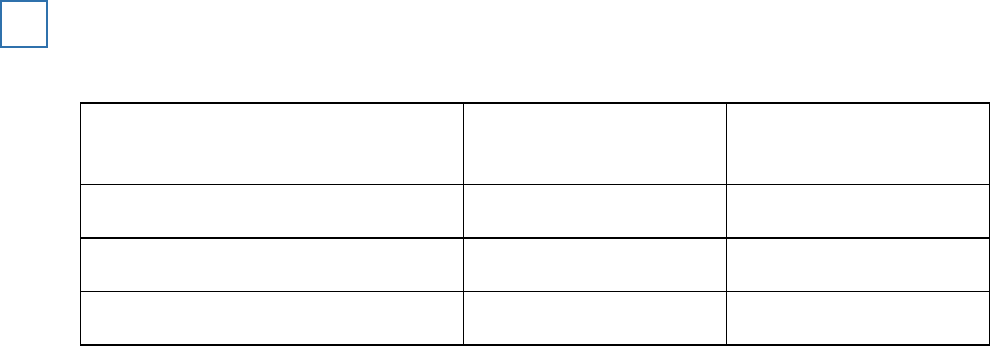

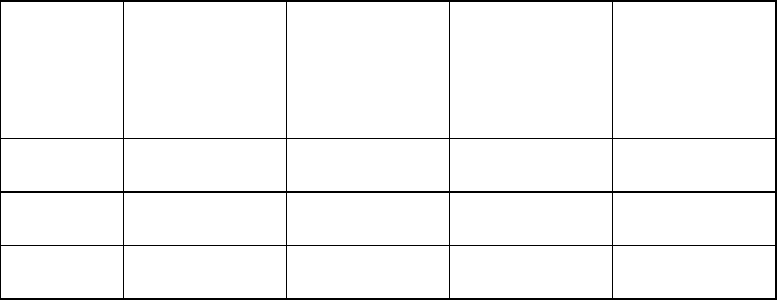

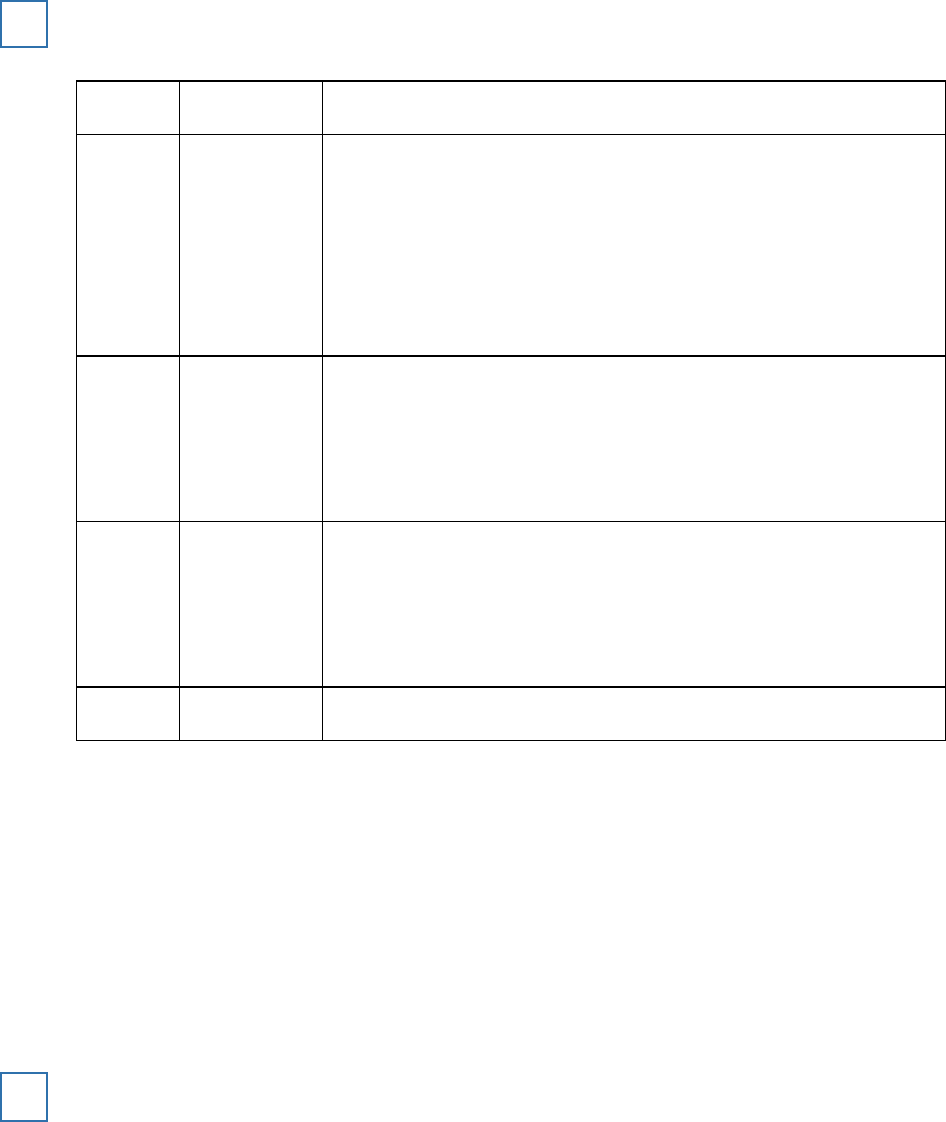

A psychologist was interested in the role of sensation-seeking in the development of addictive

behaviour. She tested ten participants addicted to smoking (Group A) and ten participants who

had no addictive behaviours (Group B). Each participant was given a questionnaire that

measured sensation-seeking. Scores on the questionnaire are given in the table below:

Sensation seeking scores for those with addictive behaviours and for those with no

addictions

29

Page 34 of 157

Group A

(Addicted to

smoking)

Score on

sensation-

seeking

questionnaire

Group B (No

addictive

behaviours)

Score on

sensation-

seeking

questionnaire

1

65

1

16

2

32

2

25

3

25

3

27

4

29

4

24

5

28

5

59

6

30

6

26

7

18

7

33

8

30

8

21

9

35

9

18

10

28

10

23

Median

Median

Complete the table by calculating the median and range for the two groups. Why did the

psychologist use the median rather than the mode?

(Total 4 marks)

Page 35 of 157

A researcher investigated obedience. The table shows the percentages of people who obeyed a

simple request from a confederate who was either smartly dressed or casually dressed.

Request

Smartly dressed

confederate

Casually dressed

confederate

Pick up some litter

80%

61%

Post a letter lying near a post box

61%

40%

Carry a heavy box up some stairs

30%

30%

What do these results suggest about obedience?

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

Extra space

________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

(Total 4 marks)

30

Page 36 of 157

Social influence research helps us to understand how it is possible to change people’s

behaviour: for example, understanding how to persuade people to eat more healthily.

With reference to this example of social change, explain how psychology might affect the

economy.

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

(Total 4 marks)

31

A psychologist wanted to investigate the effects of age of adoption on aggressive behaviour. He

compared children who had been adopted before the age of two with children who had been

adopted after the age of two. The children were observed in their school playground when they

were six years old.

(a) Suggest

two

operationalised behavioural categories the psychologist could use in his

observation of aggressive behaviour. Explain how the psychologist could have carried out

this observation.

Behavioural Category 1

_________________________________________

______________________________________________________________

Behavioural Category 2

_________________________________________

______________________________________________________________

Explanation of how the observation could have been carried out

_____

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(4)

32

Page 37 of 157

(b) Explain

one

ethical issue the psychologist would have needed to consider when carrying

out this research. How could the psychologist have dealt with this issue?

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

Extra space

___________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(4)

Page 38 of 157

The psychologist wanted to investigate how aggressive the children were when they were

at home. He interviewed a sample of their parents to investigate this.

(c) Explain why using interviews might be better than using questionnaires in this situation.

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

Extra space

___________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(4)

(Total 12 marks)

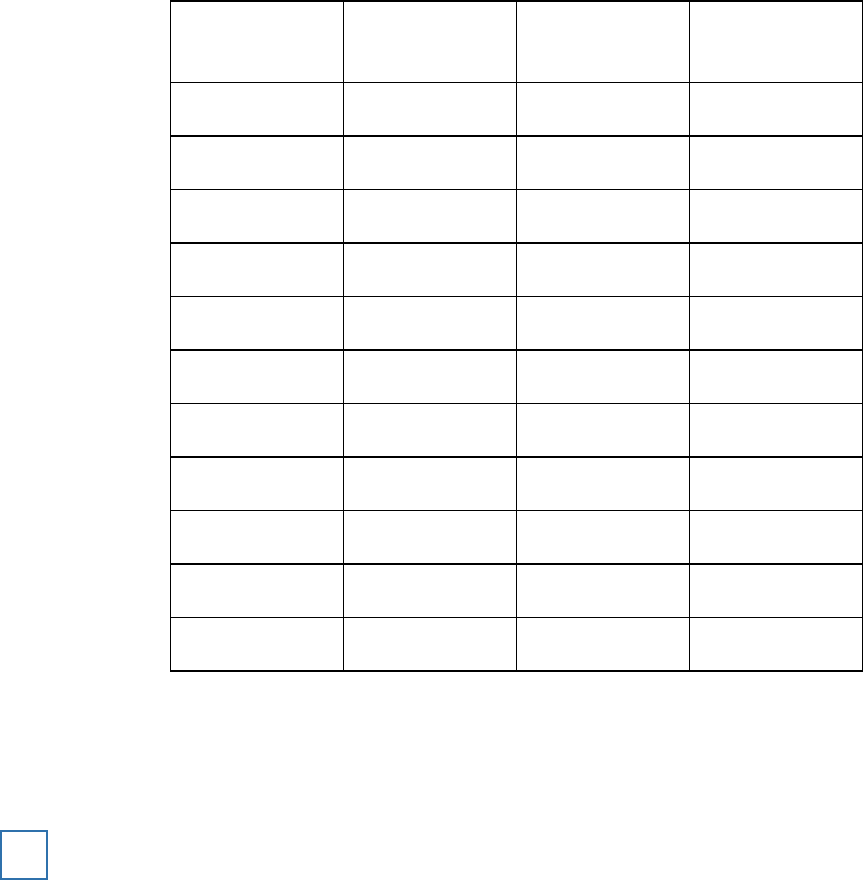

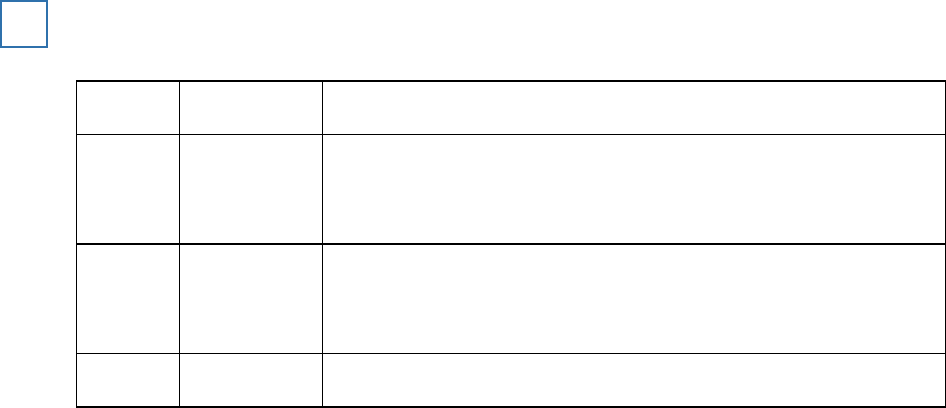

Prison staff compared two methods of managing anger in offenders. One group of offenders took

part in cognitive therapy. Another group of offenders took part in discussion therapy.

After one month following the training, levels of anger for each individual were rated by prison

staff on a scale of 0 – 100. The results are given in the table below:

Ratings of anger in offenders given either systematic CBT anger management training or

general advice

33

Page 39 of 157

Cognitive

Group

Anger rating

Discussion

group

Anger rating

1

37

1

44

2

45

2

22

3

23

3

74

4

17

4

36

5

41

5

66

6

32

6

63

7

27

7

44

8

26

8

81

9

38

9

56

10

52

10

45

Median

Median

Complete the table by calculating the median for the two groups. Show your working.

Why did the psychologist use the median as a measure of central tendency rather than the

mean?

(Total 4 marks)

A researcher investigated whether memory for words presented with pictures was better than

memory for words presented without pictures. The researcher used an independent groups

design.

In

Condition 1

, participants were given a limited time to learn a list of 20 words. They were then

asked to recall the 20 words in any order.

In

Condition 2

, participants were given the same time to learn the same 20 words, but this time

each word was presented with a picture. For example, the word ‘apple’ was presented alongside

a picture of an apple. They were then asked to recall the 20 words in any order.

34

Page 40 of 157

(a) A pilot study is a small-scale investigation carried out before the main study.

Explain why it would be appropriate for this researcher to use a pilot study. In your answer

you must refer to details of the experiment given above.

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

Extra space

___________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(4)

(b) State a non-directional hypothesis for this experiment.

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(2)

Page 41 of 157

(c) Explain

two

reasons why it was more appropriate to use an independent groups design

than a repeated measures design.

Reason 1

_____________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

Reason 2

_____________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(4)

The range and median number of words correctly recalled for participants shown

words without pictures and for participants shown words with pictures

Condition 1

Words without pictures

Condition 2

Words with pictures

Median number of

words correctly recalled

13

16

Range

11

13

(d) What do the scores in the table above show?

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(2)

After he had carried out the experiment, the researcher noticed that one participant in

Condition 1

had recalled all 20 words. The researcher thought that this participant might

have used a strategy for memory improvement, even though he had not been told to do so.

(Total 12 marks)

Page 42 of 157

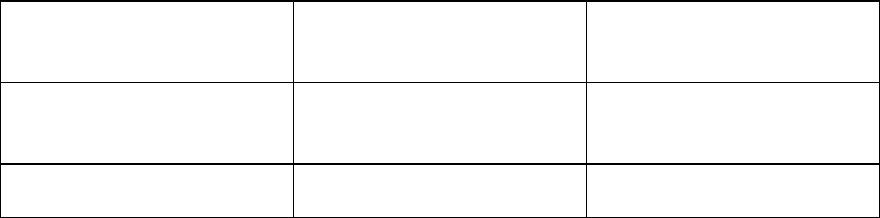

A psychologist was interested in the effects of violent computer games on aggression in young

boys. Following appropriate ethical procedures she set up a study in which she identified ten

boys who played violent computer games for at least two hours a day (Group A), and another

group of ten boys who did not play violent computer games (Group B). The boys were

systematically observed in their school playground on five separate occasions and the total

number of aggressive behaviours they demonstrated was recorded. The data are given in the

table below:

The effects of playing violent computer games on aggressive behaviour in boys

35

Group A

Number of

aggressive

acts

Group B

Number of

aggressive

acts

1

19

1

8

2

9

2

7

3

3

3

11

4

18

4

7

5

13

5

6

6

16

6

24

7

5

7

9

8

3

8

10

9

7

9

5

10

35

10

10

Median

Median

Complete the table by calculating the median for the two groups. Why did the psychologist use

the median as a measure of central tendency rather than the mean?

(Total 4 marks)

Page 43 of 157

Distinguish between a Type I error and a Type II error.

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

(Total 4 marks)

36

A researcher investigated the effectiveness of typical and atypical psychotics in schizophrenia

patients with either negative or positive symptoms.

Percentages of patients with either negative or positive symptoms, responding well to

typical or atypical antipsychotics.

37

Number of patients

responding well to

atypical

antipsychotics

Number of patients

responding well to

typical

antipsychotics

Patients with

negative symptoms

30

16

Patients with

positive symptoms

60

60

What does the data in the table seem to show about the effectiveness of typical and atypical

antipsychotics in the treatment of schizophrenia?

(Total 4 marks)

Read the item and then answer the questions that follow.

38

In a study of antisocial activity and social background, researchers interviewed

100 children aged 14 years. They then classified each child according to their

level of antisocial activity. They concluded that 26 were ‘very antisocial’, 40 were

‘mildly antisocial’ and 34 were ‘not antisocial’. The researchers found that the

majority of the ‘very antisocial’ children attended Crayford secondary school,

whereas most of the other two groups of children attended another local school.

Page 44 of 157

(a) The study on the opposite page is an example of socially sensitive research.

Briefly explain how the researchers could have dealt with the issue of social sensitivity in

this study.

(4)

(b) What level of measurement is being used in this study?

(1)

(c) Explain

one

limitation of the level of measurement you have identified in your answer to (b).

(2)

(Total 7 marks)

Briefly explain

one

reason why it is important for research to be replicated.

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

(Total 2 marks)

39

Imagine you have been asked to design a study to investigate possible gender differences in

card sorting behaviours. You decide you will ask participants to sort a shuffled pack of playing

cards into their suits of hearts, clubs, diamonds and spades. You decide you will time the

participants as they do this using a stop watch.

Discuss the following aspects of this investigation:

• with reference to the card sorting task, explain how you would ensure that this is made the

same task for all participants

•

one

methodological issue you should take into account when obtaining suitable

participants for this study and explain how you would deal with this issue

• how you would ensure that the experience of your participants is ethical.

(Total 9 marks)

40

Researchers studying male and female map reading ability calculated the following statistics

41

Map reading scores

Males

Females

Mean

15.4

5.25

Sd

2.70

2.22

Page 45 of 157

(a) What do the mean and standard deviation values suggest about the male and female

performances in the investigation?

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(4)

(b) In a replication of the part of the study in which map reading skills were investigated, 20

men and 20 women completed the original map reading task and the researchers obtained

the following data:

Male map reading scores

17, 20, 13, 12, 13, 11, 8, 17, 12, 15, 14,

18, 20, 17, 17, 15, 13, 10, 5, 9.

Female map reading scores

12, 8, 10, 11, 4, 2, 11, 18, 17, 12, 13, 10,

3, 15, 11, 9, 10, 11, 16, 10.

The mean map reading score for both groups together was 12.23.

What percentage of the male group scored above the mean score and what percentage of

the female group scored above the mean score? Show your calculations.

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(4)

Page 46 of 157

(c) Using your answers to both

question (a)

and

question (b)

, comment on the performances

of the male and the female participants in this study.

______________________________________________________________

______________________________________________________________

______________________________________________________________

______________________________________________________________

(2)

(Total 10 marks)

A psychologist wanted to test the effects of biological rhythms on the ability to solve maths

problems. She used random sampling to form two groups each of 20 students.

She tested one group on one set of maths problems at 3 am in the morning. The other group

were tested on another set of maths problems at 3 pm in the afternoon. She found that

performance of the group tested at 3 pm was significantly better than the group tested at 3 am.

When submitted for peer review the paper was rejected because of serious design problems.

42

Explain

one

problem with the design of this study and suggest ways of dealing with this problem.

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

___________________________________________________________________

(Total 4 marks)

Read the item and then answer the questions that follow.

Researchers were interested in the spatial awareness skills of motorists. They decided to

investigate a possible relationship between different aspects of spatial awareness. Motorists who

had between ten and twelve years of driving experience and held a clean driving licence with no

penalty points were asked to complete two sets of tasks.

43

Page 47 of 157

Set 1

: To follow a series of instructions and using a map, to identify various locations correctly.

This provided a map reading score for each motorist with a maximum score of 20.

Set 2

: To complete a series of practical driving tasks accurately. This involved tasks such as

driving between cones, driving within lines and parking inside designated spaces. Each motorist

was observed completing the

Set 2

tasks by a single trained observer who rated each

performance by giving the driver a rating out of 10.

The following results were obtained.

The map reading scores and driver ratings of motorists

Participant driver

Map reading score

Driver rating

1

17

9

2

8

4

3

15

7

4

12

6

5

3

2

6

4

4

7

6

8

8

14

6

9

19

10

(a) Should the hypothesis be directional? Explain your answer.